Understanding BER measurements

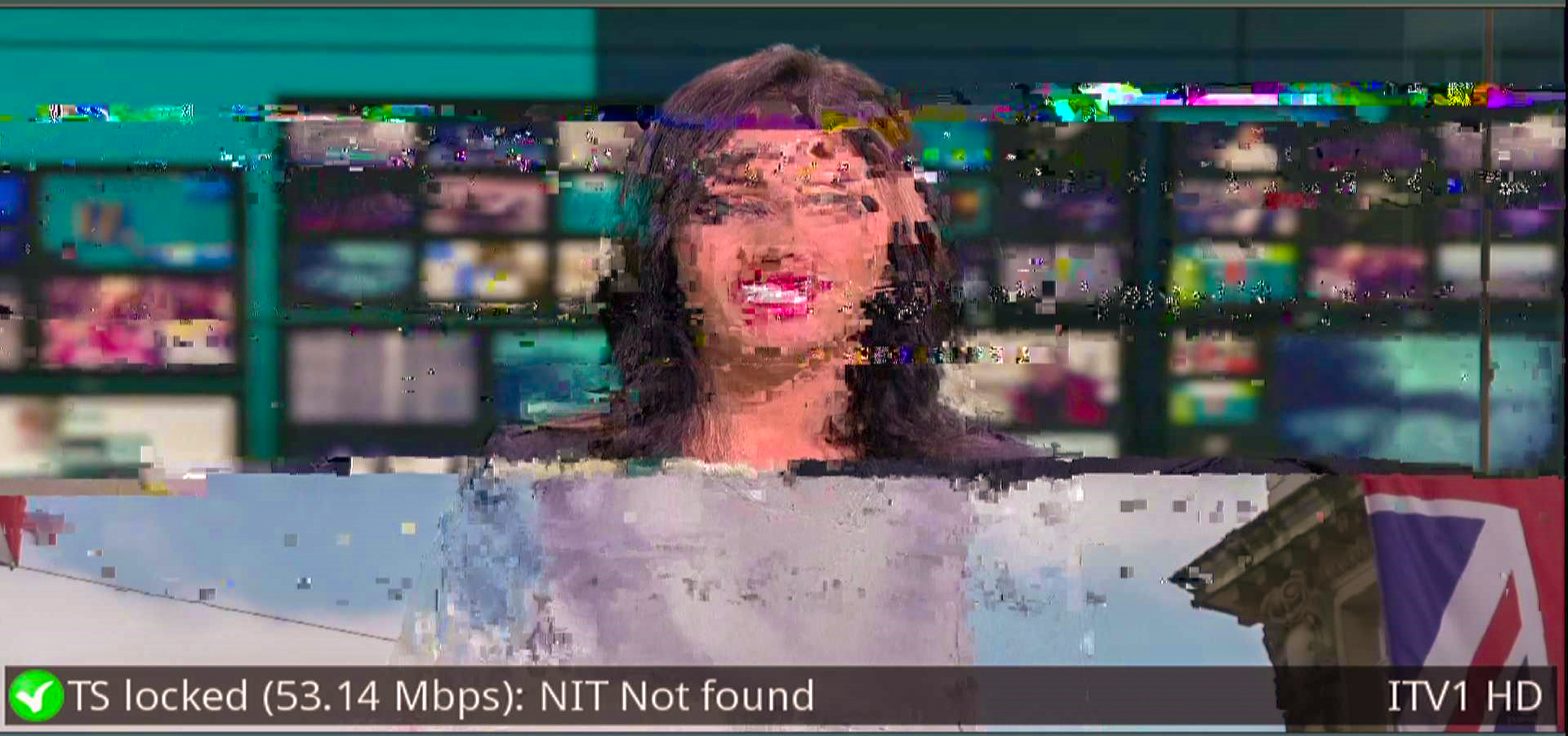

Digital television signals are subject to several types of impairments, such as noise, interference, and attenuation. These impairments can cause errors in the received signal, which can affect the quality of the television viewing experience. BER measurements provide a quantitative measure of the quality of the signal and can help identify the cause of any issues with the signal.

Bit Error Rate (BER) is an essential parameter for measuring the quality of a digital television signal. It is the number of bits received in error divided by the total number of bits transmitted. A low BER value indicates a high-quality signal, while a high BER value indicates a poor-quality signal.

There are two main methods for measuring BER in digital satellite television: the uncorrected BER method and the corrected BER method. The uncorrected BER method measures the error rate in the received signal before any error correction is applied. The corrected BER method measures the error rate after error correction has been applied, by measuring the MPEG-2 Transport Stream, to determine whether the service is Quasi Error Free (QEF).

The uncorrected BER method is used to evaluate the quality of the received signal and to determine the cause of any issues with it. This method is useful for identifying the type and severity of impairments in the signal. The corrected BER method is used to evaluate the performance of the error correction system and to determine how effective the error correction techniques used in the transmission system are. Of course, in an integrated reception system (IRS) environment we're looking for the lowest number of errors possible.

A BER measurement is typically expressed as a ratio of the number of errors to the total number of transmitted bits. Let us say that one million bits are transmitted, and three of the one million bits received are in error because of some kind of interference between the transmitter and receiver. The BER is calculated by dividing the number of error bits received by the total number of bits transmitted: 3/1,000,000 or 0.000003. We can further express 0.000003 in scientific notation, which is the way most BER measurements are displayed on signal analysers.

Scientific notation is nothing more than a shorthand method of expressing very large or very small numbers. Our example of 0.000003 is written as 3 × 10⁻⁶ in scientific notation and is displayed as 3.0E-06 on a typical analyser.
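The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function names `bit_error_rate` and `format_ber` are hypothetical, and the one-decimal E-notation format is an assumption chosen to mirror the 3.0E-06 display described above, not the behaviour of any particular analyser.

```python
def bit_error_rate(error_bits: int, total_bits: int) -> float:
    """Return the ratio of errored bits to total transmitted bits."""
    if total_bits <= 0:
        raise ValueError("total_bits must be positive")
    if not 0 <= error_bits <= total_bits:
        raise ValueError("error_bits must be between 0 and total_bits")
    return error_bits / total_bits


def format_ber(ber: float) -> str:
    """Format a BER in E-notation with one decimal place (assumed display style)."""
    return f"{ber:.1E}"


# Worked example from the text: 3 errored bits out of 1,000,000 transmitted.
ber = bit_error_rate(3, 1_000_000)
print(format_ber(ber))  # 3.0E-06
```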

The table below lists error rates in descending order, from a high number of errors to a low number of errors:

| Error rate | Decimal | Scientific notation | Analyser display |
| --- | --- | --- | --- |
| 1 error in every 10 bits | 0.1 | 1 × 10⁻¹ | 1.0E-01 |
| 1 error in every 100 bits | 0.01 | 1 × 10⁻² | 1.0E-02 |
| 1 error in every 1,000 bits | 0.001 | 1 × 10⁻³ | 1.0E-03 |
| 1 error in every 10,000 bits | 0.0001 | 1 × 10⁻⁴ | 1.0E-04 |
| 1 error in every 100,000 bits | 0.00001 | 1 × 10⁻⁵ | 1.0E-05 |
| 1 error in every 1,000,000 bits | 0.000001 | 1 × 10⁻⁶ | 1.0E-06 |

and so on.
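Going the other way, a reading in E-notation can be turned back into the "1 error in every N bits" form used above. The following is a minimal sketch; the helper name `bits_per_error` is hypothetical, and it assumes the reading is a plain E-notation string such as 1.0E-04.

```python
def bits_per_error(reading: str) -> int:
    """Convert an E-notation BER reading into the average number of bits per error."""
    ber = float(reading)  # Python's float() parses strings such as "1.0E-04"
    if not 0 < ber <= 1:
        raise ValueError("a BER reading must be greater than 0 and at most 1")
    return round(1 / ber)


# A few of the readings listed above.
for reading in ("1.0E-01", "1.0E-03", "1.0E-06"):
    print(f"{reading}: about 1 error in every {bits_per_error(reading):,} bits")
```

A reading of zero is rejected here because it has no finite "1 in N" equivalent.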

BER measurements are an essential tool for evaluating the quality of digital television signals. By understanding BER measurements, integrated reception system (IRS) engineers can identify and fix signal quality problems, ensuring that their customers receive a high-quality viewing experience.